As the demand for intelligent perception and edge computing continues to rise, conventional vision systems based on CMOS sensors are encountering bottlenecks in processing efficiency and energy consumption. Because sensing, storage, and computing units are physically separated, additional modules are required, which increases hardware complexity, latency, and power consumption. To address these limitations, in-sensor neuromorphic computing architectures have gradually emerged. These architectures integrate sensing and preliminary processing at the sensor level, enabling local, real-time preprocessing of input data such as images. In such architectures, neurons and synapses are the key units for information encoding. Within the framework of spiking neural networks (SNNs), leaky integrate-and-fire (LIF) neurons stand out for their simplicity, hardware compatibility, and support for flexible, sparse, event-driven spike coding. However, constrained by device architectures and material limitations, existing optoelectronic LIF neurons struggle to fully emulate the dynamic behavior of biological neurons. Moreover, neuron devices typically exhibit short-term dynamic behaviors, whereas synapse devices are designed for long-term plasticity and non-volatile weight storage. Integrating volatile optical sensing with non-volatile weight storage therefore remains a critical challenge for neuromorphic architectures, because conventional heterogeneous approaches are constrained by material and process incompatibilities that increase integration complexity and fabrication costs.

To address these issues, the group of Professor Yuchao Yang and Researcher Yaoyu Tao at Peking University, in collaboration with the group of Professor Xiaoxian Zhang and Professor Yongsheng Wang at Beijing Jiaotong University, explored a homogeneous integration solution. It leverages the excellent photoelectric response and integrability of the two-dimensional material MoS₂, together with the non-volatile storage characteristics of the ferroelectric material HZO (hafnium zirconium oxide). At the device level, the team designed an optoelectronic LIF neuron based on a MoS₂ phototransistor (PT). By introducing a photogenerated charge trapping-detrapping mechanism and a short-circuit discharge pathway, the device emulates key neuronal features: multispectral optical sensing, capacitor-less membrane potential integration, threshold-triggered spiking with automatic reset, and intrinsic stochasticity. In parallel, the team developed MoS₂ ferroelectric field-effect transistors (FeFETs) as artificial synapses, featuring a tunable memory window and reliable multilevel storage.
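For readers less familiar with LIF dynamics, the neuronal behaviors listed above (leaky integration, threshold-triggered firing, automatic reset, and stochastic firing) can be illustrated with a textbook discrete-time LIF model. The Python sketch below is purely illustrative: the function name `lif_spike_train` and all of its parameters (`leak`, `gain`, `threshold`, `noise_std`) are hypothetical and are not values measured on the MoS₂ device. The variable `v` only stands in for the trapped photogenerated charge, and the intrinsic stochasticity is modeled, as an assumption, by Gaussian jitter on the firing threshold.

```python
import random


def lif_spike_train(light_intensity, steps=100, leak=0.9, gain=0.2,
                    threshold=1.0, noise_std=0.05, seed=0):
    """Simulate a discrete-time LIF neuron driven by a constant light input.

    All parameters are hypothetical, chosen only for illustration.
    Returns a list of 0/1 values, one per time step (1 = spike).
    """
    rng = random.Random(seed)  # seeded so the stochastic run is reproducible
    v = 0.0  # "membrane potential"; stands in for trapped photogenerated charge
    spikes = []
    for _ in range(steps):
        # Leak: part of the stored state decays each step (volatile behavior).
        # Integrate: incident light adds charge in proportion to its intensity.
        v = leak * v + gain * light_intensity
        # Stochastic firing (assumed model): the threshold jitters each step.
        if v >= threshold + rng.gauss(0.0, noise_std):
            spikes.append(1)
            v = 0.0  # automatic reset, analogous to the short-circuit discharge
        else:
            spikes.append(0)
    return spikes
```

In this simple model a brighter input charges the neuron faster, so it reaches threshold and resets more often; for example, `sum(lif_spike_train(2.0))` exceeds `sum(lif_spike_train(1.0))`, which is how light intensity is turned into a spike rate.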

Figure 1. MoS₂-based homogeneous integration platform for visual neuromorphic computing

At the system verification level, the research team homogeneously integrated the neuron and synapse devices and combined the neural circuit with the MoS₂ device array to construct an in-sensor computing architecture for optoelectronic SNNs. In this system, optical signals are sensed by the phototransistor (PT) array, converted into spike trains by the optoelectronic neurons, and fed into the ferroelectric synapse (FeFET) array, which performs parallel multiply-accumulate (MAC) operations, achieving end-to-end neuromorphic processing. In simulation, the system reached an accuracy of 91.7% on an RGB color classification task, verifying its application potential in visual perception computing.
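The parallel MAC step can be pictured as a crossbar operation: each input spike applies a read voltage to one row, and each column sums the currents I = V·G flowing through its synapses (Ohm's law combined with Kirchhoff's current law). The sketch below is a simplified, hypothetical model rather than the team's circuit: `quantize_conductance` maps a target weight onto a fixed number of evenly spaced conductance states to mimic multilevel FeFET storage, and `crossbar_mac` computes the column currents for a single time step. The read voltage, the number of levels, and the normalized conductance range are illustrative assumptions, and signed weights (which practical arrays often realize with differential device pairs) are omitted.

```python
def quantize_conductance(g, levels=8, g_min=0.0, g_max=1.0):
    """Snap a target conductance to the nearest of `levels` evenly spaced states.

    Mimics multilevel non-volatile storage; the range and level count are
    hypothetical, normalized values.
    """
    step = (g_max - g_min) / (levels - 1)
    idx = round((g - g_min) / step)
    idx = max(0, min(levels - 1, idx))  # clamp to the available states
    return g_min + idx * step


def crossbar_mac(spikes, conductances, v_read=0.1):
    """Return per-column output currents for one time step.

    spikes:       list of 0/1 values, one per row (input neuron)
    conductances: rows x columns matrix of synaptic conductances
    v_read:       read voltage applied to a row when its input spikes (assumed)
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for s, row in zip(spikes, conductances):
        if s:  # only rows that receive a spike contribute current
            for j, g in enumerate(row):
                currents[j] += v_read * g  # Ohm's law; columns sum by KCL
    return currents
```

Summing these column currents over the successive time steps of a spike train would give the accumulated input delivered to the next layer of neurons.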

Figure 2. Co-integrated MoS₂-based neuron-synapse array for optoelectronic spiking neural networks (SNNs)

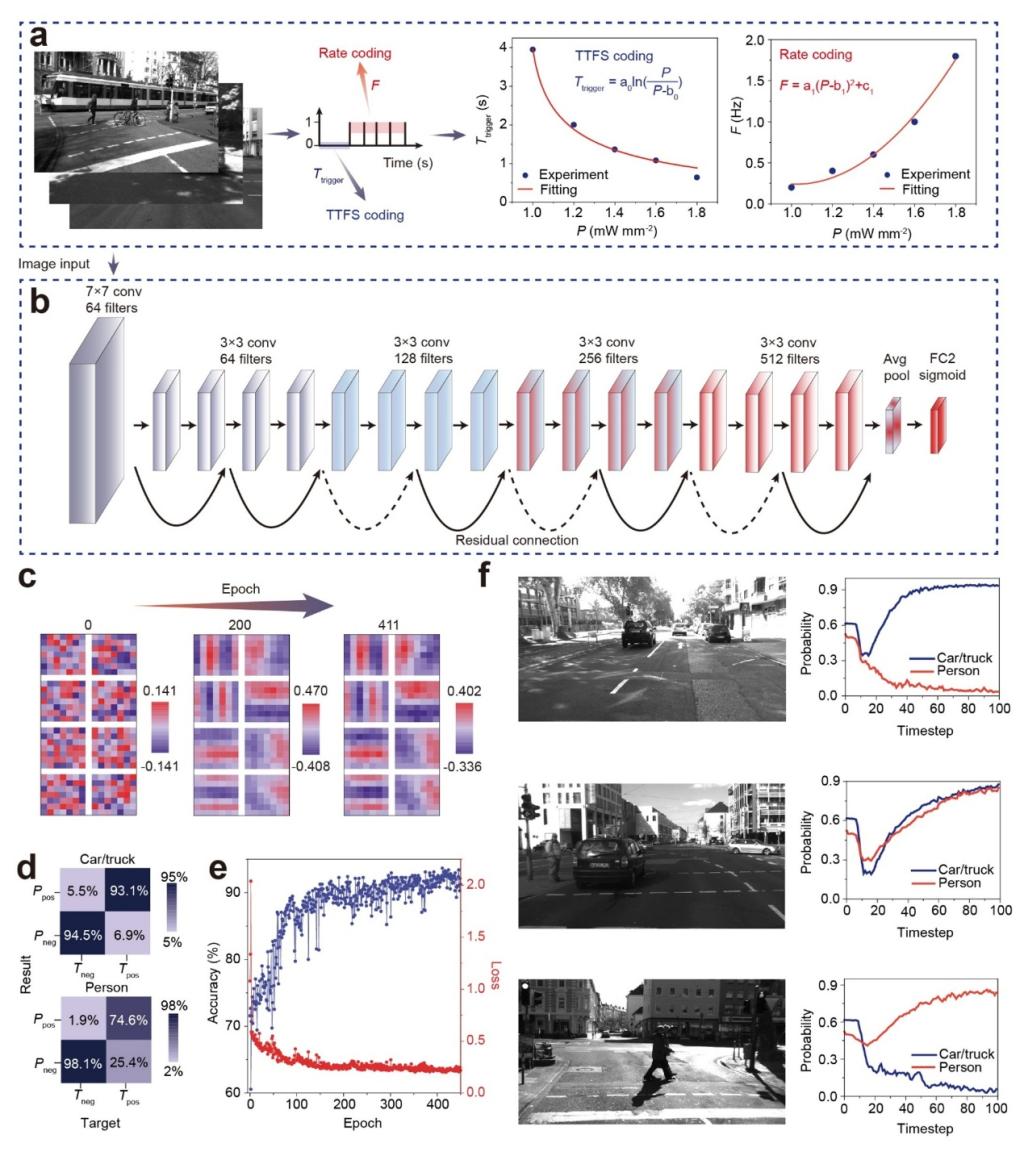

Beyond color detection, the integrated MoS₂ system was deployed for more complex tasks, such as machine vision for assisted driving. In a noisy road environment, acquired road-scene images were encoded into spikes by the bio-inspired optoelectronic LIF neurons. A hybrid strategy combining time-to-first-spike (TTFS) and rate encoding was adopted to balance rapid response and robustness. The encoded spikes were then fed into the SNN to perform feature extraction and classification. Ultimately, the system achieved a target detection accuracy of 93.5% on the test set, indicating that it is capable of handling real, complex scenarios.
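One simple way to combine the two codes is sketched below. The first spike carries TTFS information (brighter pixels fire earlier, enabling a fast response), and subsequent spikes are emitted at a rate proportional to intensity, so the spike count stays informative even if an individual spike is corrupted by noise. The article does not describe the team's exact scheme; the function `hybrid_encode`, the time `window`, and the `max_rate` parameter are hypothetical choices made only for illustration.

```python
def hybrid_encode(intensity, window=20, max_rate=0.5):
    """Encode a pixel intensity in [0, 1] as a 0/1 spike train of length `window`.

    Hypothetical hybrid scheme: TTFS sets the first spike time,
    rate coding sets the spacing of the spikes that follow.
    """
    intensity = min(max(intensity, 0.0), 1.0)  # clamp to the assumed range
    train = [0] * window
    if intensity == 0.0:
        return train  # a dark pixel never fires
    # TTFS part: brighter pixels fire earlier within the window.
    t_first = round((1.0 - intensity) * (window - 1))
    train[t_first] = 1
    # Rate part: after the first spike, fire periodically at a rate
    # (spikes per step) proportional to intensity.
    rate = max_rate * intensity
    period = max(1, round(1.0 / rate))
    for t in range(t_first + period, window, period):
        train[t] = 1
    return train
```

An image would be encoded pixel by pixel, for example `[hybrid_encode(p) for p in pixels]`, yielding one spike train per input neuron: early spikes allow a quick preliminary decision, while the total count supports a more robust final one.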

These results offer an innovative design paradigm for constructing high-performance, scalable visual neuromorphic systems.

Figure 3. Object detection in assisted-driving scenarios using the optoelectronic SNN

The results were published in Nature Communications under the title “Homogeneous integration of two-dimensional material-based optoelectronic neurons and ferroelectric synapses for neuromorphic vision”. Jiarong Wang (a doctoral candidate jointly trained by Peking University and the School of Physical Sciences and Engineering, Beijing Jiaotong University), Keqin Liu (a postdoctoral researcher at Peking University), and Pek Jun Tiw (a doctoral candidate at Peking University) are the co-first authors of the paper. Professor Yuchao Yang and Researcher Yaoyu Tao from the School of Electronic and Computer Engineering, Peking University, and Professor Xiaoxian Zhang and Professor Yongsheng Wang from the School of Physical Sciences and Engineering, Beijing Jiaotong University, are the corresponding authors. This work was supported by the New Cornerstone Science Foundation, the National Key R&D Program, the National Natural Science Foundation of China, the Guangdong Provincial Key Laboratory of In-Memory Computing Chips, the Shenzhen Science and Technology Program, and the Beijing Natural Science Foundation.

Article link: https://www.nature.com/articles/s41467-026-68905-3?utm_source=rct_congratemailt&utm_medium=email&utm_campaign=oa_20260318&utm_content=10.1038/s41467-026-68905-3